Who Controls the Loop? A Requirements Document for Local-First Agentic AI

I wrote a requirements document for an experiment I have not run yet.

The unusual part is not writing the requirements document first. The unusual part is publishing it before running the experiment.

Normally, the blog post comes after the experiment. You run the test, collect the results, draw the conclusion, and then write up what happened.

I have a DGX Spark in the lab. The interesting question is not whether it can run a model. Local inference is becoming more practical by the month. Quantization helps larger models fit in local memory. KV caching helps with long-context execution. The hardware is good enough to make real experimentation possible.

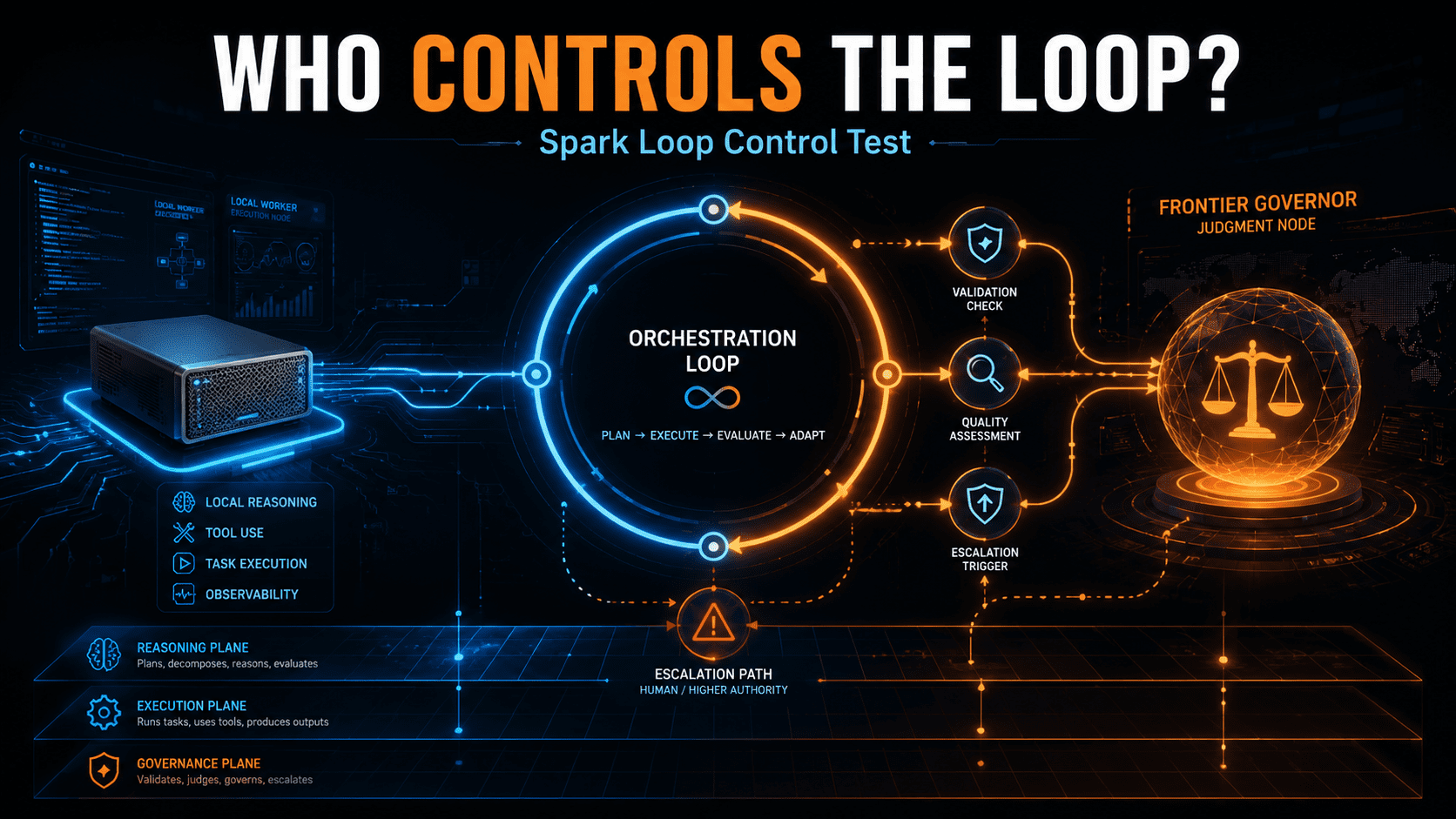

The harder question is whether a local model can participate in a real agentic system when execution, validation, and judgment are separated deliberately.

That is the question behind this requirements document.

For the past year, I have been developing a few related ideas around enterprise AI infrastructure. My 4+1 AI Infrastructure Model argues that the missing layer in many enterprise AI conversations is Layer 2C, the reasoning plane. That is where orchestration, authority placement, escalation, and judgment live. The Decision Authority Placement Model makes a related argument from a governance angle: AI systems do not fail only because the model is weak. They also fail because decision authority is poorly placed.

This experiment is a way to put those ideas under pressure.

The application is intentionally practical: RSS-to-opportunity triage. Given a new article, vendor post, research note, announcement, or transcript segment, the system has to decide whether the item should be ignored, saved, escalated for review, or turned into a draft opportunity note.

That is close to my actual editorial workflow. It is also easier to test than something subjective like “write in Keith’s voice.” The system either routes the item correctly or it does not. It either identifies the vendor, maps the topic, grounds the evidence, detects duplicates, and escalates uncertainty, or it does not.

The hypothesis is simple: local models may be excellent workers but unreliable governors.

A local model may summarize, extract, classify, and propose a decision. But the harder question is whether it should own the loop. Can it tell when the evidence is weak? Can it detect when it is summarizing instead of deciding? Can it know when to stop? Can it know when local judgment is not enough?

My guess is that the useful architecture is neither all-local nor all-cloud. It is local execution, deterministic validation, and selective escalation to a stronger reasoning model when the loop requires judgment.

In plain English: local workers, deterministic control, and outsourced judgment only when needed.

That is why I am publishing the requirements document before running the experiment. The control architecture is the content. Where the system places authority, what it validates with code, what it leaves to the model, and what triggers escalation are the same questions every enterprise will face when agent demos turn into production workflows.

The document below reads like a requirements document because it is one. That is intentional.

Everyone demos agents. The more useful question is who controls the loop.

Spark Loop Control Test: Requirements Document

1. Purpose

This document defines the requirements for testing loop-control architecture on the DGX Spark using OpenClaw as the consistent orchestration substrate across all test modes.

The experiment is designed to answer one core question:

Where should judgment live in a local-first agentic AI system?

The test is not intended to prove that a local model can run. It is intended to evaluate whether local models can serve as reliable workers when execution, deterministic validation, and frontier-model judgment are separated.

2. Core Hypothesis

Local models may be useful workers but unreliable governors.

The expected winning pattern is:

Local execution, deterministic validation, and selective escalation to a stronger reasoning model when the workflow requires judgment.

OpenClaw is the consistent orchestration substrate for Modes 0 through 4. This controls for platform behavior so that the experiment measures authority placement, not differences between agent frameworks.

3. Application Under Test

The application is RSS-to-opportunity triage.

Given a new article, vendor post, research note, product announcement, transcript segment, or RSS item, the system must decide whether the item should be:

- Ignored

- Saved

- Escalated for review

- Turned into a draft opportunity note

The system does not need to write in my voice. It needs to make a correct routing decision based on evidence, structured rules, and controlled escalation.

4. In-Scope Requirements

The test system must support:

- Running all modes through OpenClaw

- Local model execution on DGX Spark

- Frontier model execution through API calls

- Structured JSON output for every run

- Deterministic validation of outputs

- Duplicate lookup against prior items

- Gold-set evaluation against labeled examples

- Cost and latency measurement

- Repeatable runs with state reset between runs

- Logging of prompts, model calls, tool calls, validation results, and final decisions

- Mode 4 custom escalation logic

5. Out-of-Scope Requirements

The first version does not need to support:

- Production RSS feed monitoring

- Automated publishing

- Writing final blog posts or social posts

- Long-term memory or learning across runs

- Community OpenClaw skills

- Multi-user access

- Full MLOps pipeline integration

- Human-in-the-loop UI beyond recording escalation outcomes

6. Test Modes

All modes use OpenClaw as the orchestration substrate.

Mode 0: Frontier Only

OpenClaw runs the workflow using only a frontier model such as Gemini or Opus.

The frontier model receives the task and produces the final structured routing decision.

Purpose: Establish the quality baseline.

Mode 1: Local Only

OpenClaw runs the workflow using only the local model on the DGX Spark.

The local model owns the full task and produces the final structured routing decision.

Purpose: Identify where local-only loop control fails.

Mode 2: Local + Deterministic Validation

OpenClaw runs the workflow using the local model, but deterministic validation is applied to the output.

The local model still makes the decision. Validation can reject malformed or structurally untrustworthy outputs.

Purpose: Determine how much reliability deterministic validation adds before frontier judgment is required.

Mode 3: Frontier Governor / Local Worker

OpenClaw runs a governed multi-model workflow.

The frontier model acts as governor. The local model performs bounded worker tasks such as extraction, summarization, candidate framework mapping, and evidence extraction.

The frontier model makes the final routing decision.

Purpose: Determine whether frontier loop control improves results when local models perform bounded work.

Mode 4: Hybrid Escalation

OpenClaw runs the local model by default. Deterministic validators and escalation rules decide whether frontier governance is required.

The frontier model is invoked only when escalation triggers fire.

Purpose: Determine whether selective escalation can deliver near-frontier confidence at lower cost and latency.

Mode 4 is the target architecture.

7. Required Output Schema

Every mode must produce a structured output matching this schema.

    {
      "decision": "ignore | save | escalate | draft",
      "primary_vendor": "",
      "secondary_vendors": [],
      "topic": "",
      "framework_mapping": "4+1 | DAPM | AI Factory Economics | Deterministic Code in the Loop | Buyer Room | Fourth Cloud | none",
      "reason_for_decision": "",
      "evidence": [
        {
          "claim": "",
          "source_excerpt": "",
          "source_location": ""
        }
      ],
      "commercial_relevance": "none | weak | moderate | strong",
      "recommended_action": "none | monitor | social_post | blog_note | client_followup | buyer_room_angle",
      "uncertainty_flags": [],
      "duplicate_check": {
        "is_duplicate": true,
        "related_item_id": "",
        "reason": ""
      },
      "escalation_reason": ""
    }

8. Governor Response Schema

When a frontier governor is used in Mode 3 or Mode 4, its response must be structured.

    {
      "governor_action": "approve | reject | retrieve_more | revise_decision | correct_mapping | escalate_human | take_over",
      "final_decision": "ignore | save | escalate | draft",
      "corrected_framework_mapping": "4+1 | DAPM | AI Factory Economics | Deterministic Code in the Loop | Buyer Room | Fourth Cloud | none",
      "corrected_commercial_relevance": "none | weak | moderate | strong",
      "corrected_recommended_action": "none | monitor | social_post | blog_note | client_followup | buyer_room_angle",
      "reason": "",
      "additional_retrieval_request": "",
      "human_review_reason": ""
    }

When governor_action = approve, final_decision must match the local worker’s decision. Any change to the decision requires revise_decision or take_over.

The governor can overrule the local worker, but it must do so in a form the controller can process and score.
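The approve rule above is the kind of constraint the controller can enforce in code. The following is a minimal sketch, assuming the governor response has already been parsed into a Python dict. The function name `check_governor_response` and the failure codes are hypothetical and not part of OpenClaw.

```python
GOVERNOR_ACTIONS = {"approve", "reject", "retrieve_more", "revise_decision",
                    "correct_mapping", "escalate_human", "take_over"}
DECISIONS = {"ignore", "save", "escalate", "draft"}
# Only these actions are allowed to change the worker's decision.
DECISION_CHANGING_ACTIONS = {"revise_decision", "take_over"}


def check_governor_response(worker_decision: str, response: dict) -> list[str]:
    """Return failure codes for a governor response; empty means acceptable."""
    failures = []
    action = response.get("governor_action")
    final = response.get("final_decision")
    if not (isinstance(action, str) and action in GOVERNOR_ACTIONS):
        failures.append("bad_governor_action")
    if not (isinstance(final, str) and final in DECISIONS):
        failures.append("bad_final_decision")
    # Covers the approve case: approve is not a decision-changing action.
    if final != worker_decision and action not in DECISION_CHANGING_ACTIONS:
        failures.append("decision_changed_without_authority")
    return failures
```

A response that fails this check would be rejected by the controller rather than scored, which keeps governor overrides auditable.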

9. OpenClaw Substrate Requirements

OpenClaw must be configured so that it behaves consistently across all modes.

These requirements must be verified before the full build proceeds. The test cannot assume OpenClaw exposes the required controls for state reset, model routing, tool surface control, or trace export. The first implementation milestone is an OpenClaw capability audit.

If OpenClaw cannot support a requirement directly, the build must document whether the requirement can be met through configuration, wrapper code, workspace isolation, or a manual reset procedure. If a requirement cannot be met reliably, the test methodology must be revised before running comparative results.

9.1 State Reset

The system must be able to reset OpenClaw state before each test run.

At minimum, the reset process must clear or restore:

- Workspace memory

- Generated files

- Run artifacts

- Temporary context

- Tool state

- Agent notes

- Any learned or persisted skills used during the run

The goal is to prevent learning, memory, or prior outputs from contaminating repeated runs.

9.2 Model Routing

The system must support explicit model selection per mode.

Required model routing patterns:

- Frontier-only

- Local-only

- Local worker plus frontier governor

- Local default plus frontier escalation

The selected model for each step must be logged.

9.3 Tool Surface Control

The tool surface must be narrow and consistent.

Initial allowed tools:

- Source document reader

- Duplicate store lookup

- JSON/schema validator

- Optional local embedding lookup

- Optional frontier API call

Community skills and broad system-access tools should be disabled for the initial experiment.

9.4 Trace Export

Each run must export enough trace data to support evaluation.

Required trace data:

- Input item ID

- Mode

- Run number

- Model used for each step

- Prompt or instruction template ID

- Tool calls

- Tool results

- Validation results

- Escalation triggers

- Final output

- Latency

- Estimated model cost

- Errors and retries
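One way to hold the trace fields listed above is a single record per run, written as one JSON line. This is a sketch only. The class name `RunTrace`, the field names, and the JSON Lines format are assumptions for illustration. Whether OpenClaw can supply each value is a question for the capability audit.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class RunTrace:
    """One record per run; field names mirror the required trace data."""
    item_id: str
    mode: int
    run_number: int
    models_by_step: dict[str, str] = field(default_factory=dict)
    prompt_template_id: str = ""
    tool_calls: list[dict] = field(default_factory=list)  # each entry holds a call and its result
    validation_results: list[str] = field(default_factory=list)
    escalation_triggers: list[str] = field(default_factory=list)
    final_output: dict = field(default_factory=dict)
    latency_seconds: float = 0.0
    estimated_cost_usd: float = 0.0
    errors: list[str] = field(default_factory=list)
    retries: int = 0


def to_jsonl_line(trace: RunTrace) -> str:
    """Serialize a trace as a single JSON line for append-only logging."""
    return json.dumps(asdict(trace), sort_keys=True)
```

Append-only lines make it easy to load every run for a mode into one table during evaluation.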

10. Duplicate Store Requirements

The duplicate check must be grounded in an actual archive, not model intuition.

The duplicate store must include:

- Item ID

- URL

- Canonical URL

- Title

- Vendor

- Publication date

- Content hash

- Summary embedding

- Decision label

- Framework mapping

- Recommended action

Duplicate detection should support:

- Exact URL match

- Canonical URL match

- Title similarity

- Content hash match

- Embedding similarity

- Vendor plus topic plus date-window similarity

The duplicate lookup should return candidate matches. The model may explain the duplicate relationship, but it should not invent the archive.
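A minimal sketch of a deterministic lookup that returns candidates from the archive. It assumes the archive is an in-memory list of dicts keyed by the store fields above. It covers only exact URL, canonical URL, content hash, and title similarity. Embedding similarity and the vendor plus topic plus date-window check are left out. The function names and the 0.9 title threshold are placeholders, not tuned values.

```python
import hashlib
from difflib import SequenceMatcher


def content_hash(text: str) -> str:
    """Hash of lowercased, whitespace-normalized text."""
    normalized = " ".join(text.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def find_duplicate_candidates(item: dict, archive: list[dict],
                              title_threshold: float = 0.9) -> list[dict]:
    """Return archive entries that may duplicate the item, with a reason each."""
    candidates = []
    item_hash = content_hash(item["content"])
    for prior in archive:
        if item["url"] == prior["url"]:
            reason = "exact_url"
        elif item.get("canonical_url") and item["canonical_url"] == prior.get("canonical_url"):
            reason = "canonical_url"
        elif item_hash == prior["content_hash"]:
            reason = "content_hash"
        elif SequenceMatcher(None, item["title"].lower(),
                             prior["title"].lower()).ratio() >= title_threshold:
            reason = "title_similarity"
        else:
            continue
        candidates.append({"related_item_id": prior["item_id"], "reason": reason})
    return candidates
```

The model only sees the returned candidates, so it can explain a relationship but cannot cite an archive entry that does not exist.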

11. Gold Set Requirements

The evaluation must use a labeled gold set of prior items.

The purpose of the gold set is not to establish objective truth in the abstract. It is to measure whether the system makes routing decisions aligned with the operator’s judgment. For this test, accuracy means alignment with my actual or intended decision pattern.

Target size:

- Minimum: 50 items

- Preferred: 100 items

The gold set must include:

- Items that should be ignored

- Items that should be saved

- Items that should be escalated

- Items that should become draft notes

- False positives

- Real business opportunities

- Duplicate or near-duplicate items

Each item must include labels for:

- Expected decision

- Expected primary vendor

- Expected topic

- Expected framework mapping

- Expected commercial relevance

- Expected recommended action

- Duplicate status

- Related item ID when applicable

The gold set must either reflect the real distribution of the RSS feed or report performance per category. Aggregate accuracy alone is not sufficient because the real feed will likely be dominated by ignore decisions.
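A short sketch of why per-category reporting matters, using made-up counts rather than real results. The function name `per_category_accuracy` is hypothetical.

```python
from collections import defaultdict


def per_category_accuracy(results: list[tuple[str, str]]) -> dict[str, float]:
    """results holds (expected_decision, predicted_decision) pairs."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for expected, predicted in results:
        totals[expected] += 1
        if expected == predicted:
            correct[expected] += 1
    return {category: correct[category] / totals[category] for category in totals}


# Illustrative data only: a system that always answers "ignore".
results = [("ignore", "ignore")] * 8 + [("draft", "ignore")] * 2
aggregate = sum(expected == predicted for expected, predicted in results) / len(results)
by_category = per_category_accuracy(results)
```

In this illustration the aggregate is 0.8, while `by_category` shows 1.0 for ignore and 0.0 for draft. The aggregate hides that every draft opportunity was missed.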

11.1 Labeling Process

The initial labels should come from actual prior decisions where possible:

- Items that were ignored

- Items that were saved

- Items that became social posts

- Items that became blog posts

- Items that became client follow-ups

- Items that became business opportunities

For items without a clear historical outcome, I label the item according to the decision I would want the system to make. The label is operator-alignment ground truth, not universal objective truth.


If another reviewer participates, disagreements should be recorded and reconciled. Ambiguous items can be marked as such and used specifically to test escalation behavior.

12. Deterministic Validation Requirements

The validation layer must check:

- JSON validity

- Schema validity

- Allowed enum values

- Required field completion

- Evidence excerpt exists in source text

- Evidence is present for material claims

- Recommended action is consistent with commercial relevance

- Escalation reason is present when decision equals escalate

- Duplicate result includes a related item when is_duplicate = true

- Required fields are not empty

- Step budget has not been exceeded

- Context budget has not been exceeded

The validator should not decide whether the item is strategically interesting. It should determine whether the output is structurally trustworthy.
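A minimal sketch of part of this validation layer, assuming the model output arrives as a raw string and the source text is available for exact-substring evidence checks. The function name `validate_output` and the failure codes are hypothetical. The sketch leaves out the action-to-relevance pairing rules and the step and context budgets, because those rules are not defined yet. A real build would likely normalize whitespace before matching excerpts.

```python
import json

ALLOWED = {
    "decision": {"ignore", "save", "escalate", "draft"},
    "framework_mapping": {"4+1", "DAPM", "AI Factory Economics",
                          "Deterministic Code in the Loop", "Buyer Room",
                          "Fourth Cloud", "none"},
    "commercial_relevance": {"none", "weak", "moderate", "strong"},
    "recommended_action": {"none", "monitor", "social_post", "blog_note",
                           "client_followup", "buyer_room_angle"},
}
REQUIRED = ["decision", "primary_vendor", "topic", "framework_mapping",
            "reason_for_decision", "evidence", "commercial_relevance",
            "recommended_action", "duplicate_check"]


def validate_output(raw: str, source_text: str) -> list[str]:
    """Return failure codes; an empty list means the output passed."""
    try:
        out = json.loads(raw)
    except json.JSONDecodeError:
        return ["invalid_json"]
    if not isinstance(out, dict):
        return ["invalid_json"]
    failures = [f"missing_field:{name}" for name in REQUIRED if name not in out]
    for name, allowed in ALLOWED.items():
        value = out.get(name)
        if name in out and not (isinstance(value, str) and value in allowed):
            failures.append(f"bad_enum:{name}")
    evidence = out.get("evidence")
    if isinstance(evidence, list):
        for index, entry in enumerate(evidence):
            excerpt = entry.get("source_excerpt", "") if isinstance(entry, dict) else ""
            # An empty excerpt would trivially match, so reject it explicitly.
            if not excerpt or excerpt not in source_text:
                failures.append(f"excerpt_mismatch:{index}")
    if out.get("decision") == "escalate" and not out.get("escalation_reason"):
        failures.append("missing_escalation_reason")
    dup = out.get("duplicate_check")
    if isinstance(dup, dict) and dup.get("is_duplicate") is True and not dup.get("related_item_id"):
        failures.append("duplicate_without_related_item")
    return failures
```

Returning codes instead of a pass/fail flag lets the same result feed both the trace log and the Mode 4 escalation gate.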

13. Mode 4 Escalation Requirements

Mode 4 requires a custom escalation gate.

The escalation gate decides whether to keep the run local, retry locally, invoke the frontier governor, or escalate to human review.

Programmatic Escalation Triggers

The system must escalate when any of the following occur:

- Schema failure

- Missing evidence

- Evidence excerpt mismatch

- Invalid commercial relevance / recommended action pairing

- Unresolved duplicate candidate

- Tool failure

- Repeated action

- Step budget exceeded

- Context budget exceeded

- Required field missing
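A sketch of how the gate might turn fired triggers into one of its four actions. The trigger names, the set of retryable triggers, the retry limit, and the rule that human review follows an unavailable governor are all assumptions for illustration. Whether Mode 4 retries locally at all is still an open build decision.

```python
from enum import Enum


class GateAction(Enum):
    KEEP_LOCAL = "keep_local"
    RETRY_LOCAL = "retry_local"
    INVOKE_GOVERNOR = "invoke_governor"
    ESCALATE_HUMAN = "escalate_human"


# Assumption: only these structural failures are worth a local retry.
RETRYABLE = {"schema_failure", "tool_failure", "required_field_missing"}


def escalation_gate(triggers: set[str], local_attempts: int,
                    max_local_attempts: int = 2,
                    governor_unavailable: bool = False) -> GateAction:
    """Map fired triggers and attempt count to a single gate action."""
    if not triggers:
        return GateAction.KEEP_LOCAL
    # Retry locally only when every fired trigger is retryable and budget remains.
    if triggers <= RETRYABLE and local_attempts < max_local_attempts:
        return GateAction.RETRY_LOCAL
    if governor_unavailable:
        return GateAction.ESCALATE_HUMAN
    return GateAction.INVOKE_GOVERNOR
```

Keeping the policy in a small pure function makes every escalation decision reproducible from the trace alone.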

Deterministic Proxy Triggers

The system should implement deterministic proxies for softer failure modes where possible.

The goal is to avoid using the local model to judge its own output. Frontier calls used only to decide whether to call the frontier governor should be minimized, because they weaken the economics of Mode 4.

Initial proxy rules:

Summary instead of decision. Trigger when reason_for_decision has high lexical overlap with the source text but does not include decision-oriented language such as “because,” “therefore,” “recommend,” “should,” “ignore,” “save,” “escalate,” “draft,” “monitor,” or “follow up.”

Weak framework mapping. Trigger when framework_mapping is not none but reason_for_decision does not include any configured key terms associated with that framework. For example, 4+1 mappings should reference a layer, plane, infrastructure boundary, control surface, data plane, execution plane, reasoning plane, or application layer. AI Factory Economics mappings should reference cost, tokens, utilization, throughput, bottlenecks, orchestration, or business value.

Overconfident relevance. Trigger when commercial_relevance = strong but the evidence array does not contain at least one explicit vendor, buyer, client, product, market movement, budget, adoption, or competitive-positioning signal.

Underconfident relevance. Trigger when the source mentions a configured strategic vendor, active client, tracked thesis area, or known business opportunity but the decision is ignore or commercial relevance is none.

High output variance. Trigger when repeated local runs on the same item produce different values for decision, framework_mapping, commercial_relevance, or recommended_action beyond an allowed threshold.

Objective drift. Trigger when the final output lacks a valid routing decision, lacks a recommended action, or primarily answers a different task such as generic summarization, sentiment analysis, or vendor description.

Evidence ambiguity. Trigger when evidence excerpts support more than one plausible decision category, or when candidate excerpts contradict one another.

Commercial ambiguity. Trigger when content relevance and business relevance diverge. Example: the item strongly maps to a framework but has no obvious vendor, buyer, client, or opportunity signal.

These proxy rules are first-pass implementation logic. They will be tuned after the first 10-item test set produces failure examples.
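Two of the proxy rules above, sketched as plain functions. The token-overlap measure, the 0.8 threshold, and the abbreviated term lists are assumptions that would need tuning against real failure examples. Frameworks with no configured terms always trigger in this sketch, which is a deliberately conservative choice.

```python
import re

DECISION_TERMS = ["because", "therefore", "recommend", "should", "ignore",
                  "save", "escalate", "draft", "monitor", "follow up"]

# Abbreviated term lists; only two frameworks are configured here.
FRAMEWORK_TERMS = {
    "4+1": ["layer", "plane", "infrastructure boundary", "control surface"],
    "AI Factory Economics": ["cost", "tokens", "utilization", "throughput",
                             "bottleneck", "orchestration", "business value"],
}


def _tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def lexical_overlap(reason: str, source: str) -> float:
    """Share of distinct tokens in the reason that also appear in the source."""
    reason_tokens = _tokens(reason)
    if not reason_tokens:
        return 0.0
    return len(reason_tokens & _tokens(source)) / len(reason_tokens)


def summary_instead_of_decision(reason: str, source: str,
                                overlap_threshold: float = 0.8) -> bool:
    """Trigger on high overlap with the source and no decision-oriented language."""
    lowered = reason.lower()
    has_decision_language = any(
        re.search(rf"\b{re.escape(term)}", lowered) for term in DECISION_TERMS)
    return lexical_overlap(reason, source) >= overlap_threshold and not has_decision_language


def weak_framework_mapping(framework: str, reason: str) -> bool:
    """Trigger when a mapped framework's key terms are absent from the reason."""
    if framework == "none":
        return False
    lowered = reason.lower()
    return not any(term in lowered for term in FRAMEWORK_TERMS.get(framework, []))
```

Neither function calls a model, so both can run on every local output without adding frontier cost.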

14. Metrics Requirements

14.1 Process Metrics

The system must record:

- Schema pass rate

- Step count

- Tool repetition rate

- Escalation rate

- Correct escalation rate

- Missed escalation rate

- Recovery rate

- Completion discipline

- Latency per accepted decision

- Cost per accepted decision

14.2 Output Metrics

The system must record:

- Decision accuracy

- Vendor accuracy

- Topic accuracy

- Framework accuracy

- Evidence grounding accuracy

- Commercial relevance accuracy

- Recommended action accuracy

- Duplicate detection accuracy

- False positive rate

- False negative rate

14.3 Confidence Metrics

The system must support repeated runs of the same item.

Each item should be run three to five times per mode.

The system must measure:

- Decision variance

- Framework mapping variance

- Commercial relevance variance

- Recommended action variance

- Escalation variance

- Acceptance pass rate

Confidence means repeatable acceptable performance, not subjective satisfaction.
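One simple way to quantify variance across repeated runs is the share of runs that agree with the most common value for each field. This is a sketch of that measure only. The document does not fix a variance formula, and the function names are hypothetical.

```python
from collections import Counter

SCORED_FIELDS = ("decision", "framework_mapping",
                 "commercial_relevance", "recommended_action")


def agreement_rate(values: list[str]) -> float:
    """Share of runs matching the most common value; 1.0 means no variance."""
    if not values:
        raise ValueError("need at least one run")
    most_common_count = Counter(values).most_common(1)[0][1]
    return most_common_count / len(values)


def field_agreement(runs: list[dict], fields: tuple = SCORED_FIELDS) -> dict[str, float]:
    """Agreement rate per scored field across repeated runs of one item."""
    return {name: agreement_rate([run[name] for run in runs]) for name in fields}
```

With five runs where four say save and one says draft, the decision agreement is 0.8. A threshold on this value could serve as the high output variance trigger in Mode 4.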

15. Acceptance Criteria

The initial test is successful if it can:

- Run all five modes through OpenClaw

- Reset state between runs

- Produce structured outputs for every mode

- Validate outputs deterministically

- Log traces for every run

- Compare outputs against the gold set

- Measure cost, latency, variance, and accuracy

- Demonstrate where local-only execution fails

- Demonstrate whether deterministic validation improves reliability

- Demonstrate whether frontier governance improves quality

- Demonstrate whether hybrid escalation reduces frontier usage while preserving acceptable accuracy

16. Interpretation Rules

If Mode 1 fails, local-only loop control is not sufficient.

If Mode 2 improves materially over Mode 1, deterministic validation is carrying meaningful control-plane value.

If Mode 3 performs close to Mode 0, frontier loop control is useful even when local models do the bounded work.

If Mode 0 and Mode 3 perform similarly with no cost, latency, or privacy advantage, the local worker may be adding complexity without earning its place.

If Mode 4 performs close to Mode 3 at materially lower cost or frontier usage, the hybrid architecture is validated.

If Mode 4 escalates constantly, the local worker model or escalation rules are not strong enough.

If Mode 4 misses important escalations, the control layer is too weak.

If Mode 2 performs close to Mode 4, deterministic code may matter more than frontier reasoning for this workload.

17. 4+1 AI Infrastructure Mapping

Layer 0: Compute & Fabric / AI Utility Layer. DGX Spark, local memory, local inference runtime, model hosting, KV cache constraints.

Layer 2B: Execution Plane. Local model inference, extraction, summarization, classification, RAG execution, and tool calls.

Layer 2C: Reasoning Plane. OpenClaw orchestration, deterministic validators, escalation gate, frontier governor, authority placement, and loop control.

Layer 3: Application Layer. RSS-to-opportunity triage workflow.

Deterministic code in the loop belongs inside Layer 2C as a structural control mechanism. It validates what can be validated, constrains model behavior, and decides when judgment must be escalated.

18. Initial Build Milestones

Milestone 0: OpenClaw Capability Audit

Before building the full harness, verify what OpenClaw actually supports.

The audit must answer:

- Can OpenClaw state be reset reliably between runs?

- Where does OpenClaw store memory, notes, generated files, and run artifacts?

- Can the model be selected explicitly per run or per step?

- Can local and frontier models be used in the same workflow?

- Can community skills and broad system-access tools be disabled?

- Can tool access be constrained to a narrow allowed list?

- Can prompts, model calls, tool calls, outputs, errors, and latency be exported?

- Can custom validation code be inserted after model output?

- Can custom escalation logic be inserted before frontier calls?

- Can repeated runs be isolated enough to support variance testing?

Deliverable: A capability matrix showing whether each requirement is supported directly, supported through workaround, unsupported, or unknown.

Milestone 1: Minimal Signal Harness

Build the smallest OpenClaw-based harness that can run one input item through Mode 0 and Mode 1 with structured output logging.

This milestone should not wait for the full duplicate store, full trace exporter, or complete gold set.

The goal is early signal:

- Does the local model fail in the expected ways?

- Does the frontier model establish a noticeably stronger routing baseline?

- Are the output schema and prompt instructions workable?

- Does OpenClaw behave consistently enough for the test methodology?

Use 10 manually selected and manually labeled items.

Milestone 2: Schema Validation

Add deterministic JSON schema validation and failure logging.

Milestone 3: Gold Set Loader

Create the gold set format and load the first 10 labeled examples from the minimal signal test.

Milestone 4: Mode 0 and Mode 1 Runs

Run frontier-only and local-only modes against the initial gold set.

Milestone 5: Mode 2 Validation

Add deterministic validation to the local-only path and compare against Mode 1.

Milestone 6: Mode 3 Governor Pattern

Add frontier governor / local worker delegation inside OpenClaw.

Milestone 7: Mode 4 Escalation Gate

Add custom escalation logic and measure frontier usage reduction.

Milestone 8: Full Gold Set Evaluation

Scale from 10 labeled examples to 50–100 examples and produce a comparative results table across all modes.

19. Build Decisions

These are not open questions. They are build decisions that must be resolved at specific milestones.

Must Resolve Before Milestone 1

- Which local model is the first worker model?

- Which frontier model is the first governor model?

- Can OpenClaw reliably reset state between runs?

- Can OpenClaw route explicitly to local and frontier models?

- Can OpenClaw export enough trace data for evaluation?

Must Resolve Before Milestone 4

- How should source items be normalized before entering OpenClaw?

- What is the initial step budget per run?

- What is the initial context budget per run?

- What is the initial gold set distribution?

Must Resolve Before Milestone 7 (Mode 4)

- What threshold defines high output variance?

- What threshold defines a duplicate candidate?

- Should Mode 4 retry locally before escalating to the frontier governor?

- What level of cost reduction is required for Mode 4 to be considered successful?

- What accuracy delta from Mode 3 is acceptable for Mode 4?


Keith Townsend is a seasoned technology leader and Founder of The Advisor Bench, specializing in IT infrastructure, cloud technologies, and AI. With expertise spanning cloud, virtualization, networking, and storage, Keith has been a trusted partner in transforming IT operations across industries, including pharmaceuticals, manufacturing, government, software, and financial services.

Keith’s career highlights include leading global initiatives to consolidate multiple data centers, unify disparate IT operations, and modernize mission-critical platforms for “three-letter” federal agencies. His ability to align complex technology solutions with business objectives has made him a sought-after advisor for organizations navigating digital transformation.

A recognized voice in the industry, Keith combines his deep infrastructure knowledge with AI expertise to help enterprises integrate machine learning and AI-driven solutions into their IT strategies. His leadership has extended to designing scalable architectures that support advanced analytics and automation, empowering businesses to unlock new efficiencies and capabilities.

Whether guiding data center modernization, deploying AI solutions, or advising on cloud strategies, Keith brings a unique blend of technical depth and strategic insight to every project.