Layer 1A Is Table Stakes. The Real AI Infrastructure Question Is Above It.

I run a production AI system on Google Cloud. Last year, I migrated it to an on-premises NVIDIA DGX Spark — not to leave Google, but to understand what leaving would require.

What I found: the data moved fine. The judgment didn’t.

What should have been a configuration change became a multi-layer architectural rebuild. Not because the storage was incompatible. Because the intelligence above storage — the retrieval logic, the context construction, the semantic relationships that determined what the model could reason over — was coupled to the platform.

I went back to Google. By choice. Not because the POC failed — it worked. But because it revealed something more important than portability: the layers above storage are where the real value lives, and rebuilding them from scratch is the actual cost of leaving a platform. For my workload, borrowing Google’s judgment at those layers was worth the coupling.

That was a deliberate decision. Most enterprises are making the same decision implicitly — without running the POC, without understanding what they are coupled to, and without knowing what would break if they tried to move.

And then this week, at Google Cloud Next, Google announced the Knowledge Catalog (formerly Dataplex Universal Catalog), a managed semantic graph that maps how data across an entire organization relates to itself, across clouds, using Gemini to build the intelligence layer that determines what context reaches your models.

Google just productized the exact layer I discovered was non-portable.

I call this borrowed judgment. It is the lock-in vector that no portability checklist will ever catch. And it is the concept that should be at the center of every enterprise AI infrastructure decision in 2026.

The question borrowed judgment forces is simple: who owns the reasoning logic your AI system depends on — you, or the platform?

Three months ago, I published a stress test evaluating MinIO’s S3-compatible object storage claims against the 4+1 AI Infrastructure Model. The conclusion: S3 compatibility at the storage layer is necessary for portability but not sufficient for governance, operational readiness, or policy design at the layers above it.

That conclusion was correct. But the frame was too narrow.

The market has since made something obvious that was only implicit in January: being a Layer 1A storage company is no longer a viable position. Every major vendor knows it. And Google just showed everyone where the real game is — and what it costs to play.

The Market Moved. In the Same Direction.

Pure Storage rebranded to Everpure. VAST stopped calling itself a storage company and started calling itself an AI Operating System. MinIO shipped Iceberg tables, a Hugging Face-compatible model registry, and an NVIDIA STX integration. AWS deepened S3 Tables with Intelligent-Tiering, GovCloud support, and simplified IAM across storage and catalog.

And Google announced a cross-cloud knowledge catalog that builds a semantic graph across all your data — regardless of where it sits or who stores it.

Every major vendor in this space has reached the same conclusion: fast, reliable, portable storage — what I call Layer 1A in the 4+1 model — is assumed. It is not the value line.

So where is the value line?

What Enterprise AI Actually Needs From the Data Plane

Strip away the vendor positioning and ask the question that matters to a CTO running production AI:

Do the right embeddings reach the right GPUs at the right time to produce trustworthy inference?

That is the entire game. Not storage throughput. Not Iceberg compatibility. Not global namespace architecture.

Reduced hallucinations and better performance. In that order.

That priority ordering matters. An AI system that hallucinates fast is worse than an AI system that reasons correctly at moderate speed. The enterprise does not need maximum token throughput. It needs maximum trust in output — and then it needs that trust delivered at operational speed.
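One way to make that ordering concrete is a lexicographic comparison: rank candidate systems by a trust measure first, and let speed break ties only between systems that are equally trustworthy. The sketch below assumes two hypothetical metrics, `hallucination_rate` (lower is better) and `tokens_per_second` (higher is better). The candidate names and numbers are illustrative placeholders, not measurements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """A candidate AI system scored on two hypothetical evaluation metrics."""
    name: str
    hallucination_rate: float  # fraction of answers judged unfaithful (lower is better)
    tokens_per_second: float   # serving throughput (higher is better)


def rank(candidates: list[Candidate]) -> list[Candidate]:
    """Order by trust first, then speed, using a lexicographic sort key.

    Throughput only matters between candidates with equal hallucination rates.
    """
    return sorted(candidates, key=lambda c: (c.hallucination_rate, -c.tokens_per_second))


# Illustrative placeholder numbers, not benchmark results.
fast_but_wrong = Candidate("fast_but_wrong", hallucination_rate=0.12, tokens_per_second=900.0)
slow_but_right = Candidate("slow_but_right", hallucination_rate=0.02, tokens_per_second=300.0)
print([c.name for c in rank([fast_but_wrong, slow_but_right])])
```

Because trust is the first element of the key, no amount of extra throughput lets the faster system outrank the more faithful one.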

This is a pipeline-to-runtime problem: Layer 1C to Layer 2B in the 4+1 model, which I explain below. Storage is the substrate underneath it. The value lives above it.

The 4+1 Model: Where the Value Actually Lives

A quick primer for readers encountering this framework for the first time.

The 4+1 AI Infrastructure Model maps the architectural layers between hardware and business applications in an enterprise AI system. I built it after migrating production AI workloads across environments and discovering that most AI failures get misdiagnosed — organizations lack shared vocabulary for where in the stack the problem actually lives. The model provides that vocabulary. The layer designations (1A, 1C, 2B, 2C) are references to specific architectural planes, not arbitrary labels.

Here is why each layer matters for this analysis:

Layer 0 — Foundation: compute, networking, GPUs. The hardware. Not the focus of this piece.

Layer 1A — Data Storage & Governance. This is where your data sits — object stores, file systems, databases. S3-compatible storage, Iceberg tables, the things vendors are competing on. In 2026, this is table stakes. Fast. Reliable. Portable. S3-compatible. Iceberg-capable. If your storage vendor cannot deliver these, they are not a serious option. Full stop. But these are qualifying criteria, not differentiators.

Layer 1B — Context Management & Retrieval. Vector databases, embedding stores, RAG retrieval. This is where stored data becomes searchable context for models. Layer 1B works in concert with Layer 1C — the pipeline feeds the retrieval system, and the retrieval system feeds the model.
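At its simplest, retrieval at Layer 1B is a nearest-neighbor lookup: compare a query embedding against stored embeddings and return the closest chunks. The brute-force cosine-similarity sketch below is a minimal illustration that assumes toy three-dimensional vectors and hypothetical chunk IDs. Production vector databases use approximate indexes rather than a linear scan.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query: list[float], index: dict[str, list[float]], k: int = 2) -> list[str]:
    """Return the IDs of the k stored embeddings most similar to the query."""
    scored = sorted(index.items(), key=lambda item: cosine(query, item[1]), reverse=True)
    return [chunk_id for chunk_id, _ in scored[:k]]


# Hypothetical chunk IDs and toy embeddings, for illustration only.
index = {
    "pricing-faq#3": [0.9, 0.1, 0.0],
    "gpu-runbook#1": [0.1, 0.9, 0.2],
    "policy-doc#7": [0.0, 0.2, 0.9],
}
print(top_k([0.8, 0.2, 0.1], index, k=1))
```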

Layer 1C — Data Movement & Pipelines. This is where data becomes context. Embeddings are created, transformed, and delivered to models here. The quality of your RAG pipeline, the freshness of your vector indices, the precision of your chunking strategy, the discipline of your metadata — all of this happens at Layer 1C. And all of it determines whether the model hallucinates or reasons. This is the layer that broke when I moved my system off Google Cloud. This is where the value lives.
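To make “chunking strategy” and “metadata discipline” concrete, here is a minimal sketch of one common approach: fixed-size word windows with overlap, where each chunk carries its source and publication date so that downstream retrieval can filter on freshness. The window sizes, field names, and the `Chunk` type are assumptions for illustration, not a recommended configuration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Chunk:
    """A unit of retrievable context plus the metadata that travels with it."""
    text: str
    source: str
    published: date
    position: int  # index of the chunk within its source document


def chunk_document(text: str, source: str, published: date,
                   size: int = 50, overlap: int = 10) -> list[Chunk]:
    """Split text into overlapping word windows and attach metadata to each."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    words = text.split()
    if not words:
        return []
    step = size - overlap
    chunks = []
    # Stop before a window that would contain only already-covered overlap words.
    for position, start in enumerate(range(0, max(len(words) - overlap, 1), step)):
        window = words[start:start + size]
        chunks.append(Chunk(" ".join(window), source, published, position))
    return chunks


# Hypothetical 120-word document and source path.
doc = " ".join(f"w{i}" for i in range(120))
chunks = chunk_document(doc, "blog/example-post", date(2026, 4, 29), size=50, overlap=10)
print(len(chunks), chunks[1].text.split()[0])  # 3 w40
```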

Layer 2A — Infrastructure Orchestration. Kubernetes, cluster management, resource scheduling. The operational machinery that keeps the layers above it running. Critical, but largely commoditized — most enterprises consume this through managed services or established platforms.

Layer 2B — Application Runtime & Serving. This is where embeddings meet GPUs. Model serving, inference orchestration, runtime configuration. The wrong embedding delivered to the right GPU produces the same garbage as the right embedding delivered to no GPU at all.

Layer 2C — Agentic Infrastructure. This is the reasoning and policy plane — where governance determines what the AI system is allowed to do with its reasoning. I formalize this through the Decision Authority Placement Model (DAPM): who decides what the AI can access, what it can conclude, and what actions it can take?

Layer 3 (+1) — Applications. AI-powered business systems where value is realized. The “+1” because this layer is what everything else exists to serve.

The cascade runs: 1A stores the data. 1C transforms it into context. 2B serves it to models. 2C governs what happens next. 3 delivers value to the business.
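For readers who think in code, the layer map and the cascade can be written down as plain data. This is a hypothetical encoding for illustration, not part of the published model; the `Layer` enum and its one-line summaries simply restate the descriptions above.

```python
from enum import Enum


class Layer(Enum):
    """The 4+1 layers as described in this article (summaries paraphrased)."""
    L0 = "Foundation: compute, networking, GPUs"
    L1A = "Data Storage & Governance"
    L1B = "Context Management & Retrieval"
    L1C = "Data Movement & Pipelines"
    L2A = "Infrastructure Orchestration"
    L2B = "Application Runtime & Serving"
    L2C = "Agentic Infrastructure (reasoning and policy plane)"
    L3 = "Applications (+1)"


# The cascade: what each layer contributes on the path from data to business value.
CASCADE = [
    (Layer.L1A, "stores the data"),
    (Layer.L1C, "transforms it into context"),
    (Layer.L2B, "serves it to models"),
    (Layer.L2C, "governs what happens next"),
    (Layer.L3, "delivers value to the business"),
]

for layer, role in CASCADE:
    print(f"{layer.name:>4}  {role}")
```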

If you watched the Google Cloud Next demos this week, most of what looked magical — agents pulling the right facts from scattered systems in real time — is Layer 1C and Layer 2C work. Not Layer 1A. Not Layer 0. The Knowledge Catalog is Google’s managed 1C; the GPUs and storage it rides on are table stakes.

Layer 1A is the foundation. It is not the differentiator. Vendors know this. That is why none of them want to be “just” a storage company anymore.

Four Vendors, Four Architectural Bets

Every major player is trying to own the layers above storage. They are doing it in fundamentally different ways — and each way carries a different borrowed judgment cost.

AWS S3 Tables — The Managed Default

AWS S3 Tables is not a storage product decision. It is a platform inheritance.

If you are running AI workloads in AWS, S3 Tables with Iceberg is the default Layer 1A. Governance integrates through IAM and Lake Formation. Catalog management flows through Glue. Query engines — Athena, Redshift, EMR — connect natively. Intelligent-Tiering handles cost optimization automatically. And vector retrieval is available through managed services — OpenSearch, Bedrock Knowledge Bases — that consume directly from S3. AWS gives you a managed path from Layer 1A storage through Layer 1B vector retrieval without leaving the platform.

The enterprise does not operate Layer 1A or 1B. AWS does.

AWS does not need to climb the stack. AWS is the stack. The enterprise gets operational simplicity at the cost of placement authority. Data lives where AWS puts it, governed how AWS governs it.

For workloads where sovereignty and cost predictability at sustained scale are not primary constraints, this is the path of least resistance. For workloads where they are, this is the Fourth Cloud trigger.

Borrowed judgment cost: moderate. AWS makes infrastructure placement decisions on your behalf. The semantic intelligence — how your data relates to itself, what context reaches your models — remains largely the enterprise’s responsibility to build on top of AWS services. You borrow AWS’s operational judgment. You still own the reasoning judgment. If you are fine inheriting AWS’s placement and governance defaults and owning your own semantic layer, that is a reasonable trade.

MinIO AIStor — The Portable Substrate

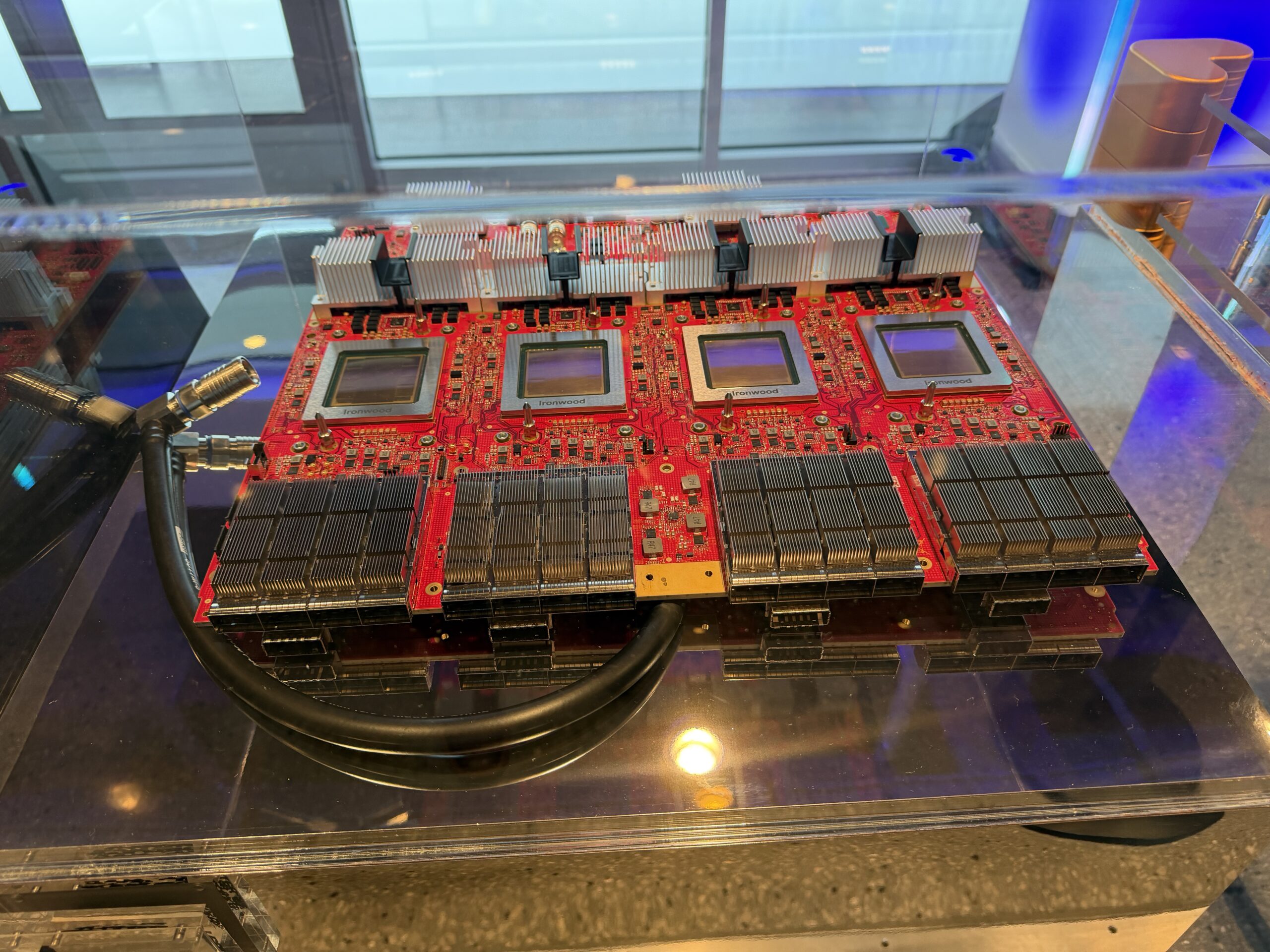

MinIO’s pitch has always been clear: S3-compatible, software-defined, runs anywhere. AIStor Tables adds Iceberg. MinIO has announced support for NVIDIA’s STX reference architecture, with AIStor running natively on BlueField-4 with GPUDirect RDMA (GA expected in the second half of 2026). AIHub adds a Hugging Face-compatible model registry at the storage layer.

MinIO gives enterprises placement authority — you decide where data lives, on what infrastructure, under what governance. That is real and valuable for Fourth Cloud architectures.

But there is a critical distinction to understand at Layer 1B: MinIO does not provide native vector search. AIStor is the storage backend for external vector databases — Milvus, Weaviate, LanceDB — which persist their embeddings and index files on AIStor via S3. The vector search itself runs in a separate system that the enterprise deploys and operates. This is a meaningful architectural difference from VAST, where vectors are a native data type inside the platform.

And here is MinIO’s broader strategic problem: portability is their primary differentiator, and they are no longer alone in claiming it. VAST runs across hyperscalers, neoclouds, and on-prem. Google’s cross-cloud lakehouse queries Iceberg data wherever it sits without moving it. Portability at Layer 1A is becoming as commoditized as the storage itself.

MinIO delivers the substrate. It does not deliver the operational wrapper. S3 compatibility is an API, not a governance model. Iceberg support is a table format, not a metadata discipline. AIHub is a model registry, not a model lifecycle platform. Every capability above Layer 1A — governance integration, pipeline orchestration, policy enforcement, Layer 2C reasoning — remains the enterprise’s responsibility.

Borrowed judgment cost: zero. MinIO makes no decisions about your data’s meaning, your retrieval logic, or your reasoning policies. You own everything. You build everything. For enterprises with the team and the discipline to design Layer 1C and Layer 2C, this is the cleanest foundation. For enterprises without that discipline, zero borrowed judgment is how projects stall at pilot.

VAST Data — The Platform Absorber

VAST is no longer a storage company. The AI Operating System absorbs Layer 1A (DataStore), Layer 1B (native vector search in the DataBase — vectors as a first-class data type alongside structured and unstructured data), Layer 1C (DataEngine, SyncEngine), Layer 2B (InsightEngine, AgentEngine), and Layer 2C (PolicyEngine, TuningEngine — both announced at VAST Forward 2026 for end-of-year release) — with Polaris as a global control plane orchestrating it all across hyperscalers, neoclouds, and on-prem.

The native vector capability matters. Where MinIO requires an external vector database like Milvus running on top of the storage layer, and AWS provides vector retrieval through separate managed services, VAST embeds vectors directly into its DataBase engine — queryable through the same interface, governed by the same policies, GPU-accelerated via NVIDIA cuVS. No separate system to deploy, no additional integration to maintain. For the “right embeddings to the right GPUs” problem, VAST has the most direct path from storage to retrieval in a single platform.

VAST runs on Google Cloud, Azure, AWS, CoreWeave, and on-premises. They have a $1.17 billion CoreWeave deal and a Google Cloud partnership. They run natively on BlueField-4 DPUs. DataSpace provides the global namespace. Polaris provides the intent-driven orchestration.

VAST makes no secret that they begin at petabyte scale. That is where their architecture shines and where their economics work. Not every problem is a petabyte problem. But for the enterprises operating at that scale — and those are the enterprises making the largest AI infrastructure investments — VAST’s integrated platform eliminates the stitching that MinIO leaves to the customer and eliminates the sovereignty constraints that AWS imposes.

VAST’s portability claim also neutralizes MinIO’s primary differentiator. If VAST runs everywhere through a software-defined model with a global namespace, then portability is no longer MinIO’s alone. VAST offers portability plus the operational wrapper and the layers above storage.

Borrowed judgment cost: high. When a single vendor absorbs Layers 1A through 2C, Layer 2C — the reasoning plane — becomes a vendor-controlled capability rather than an enterprise-designed one. PolicyEngine, TuningEngine, AgentEngine — these are not neutral infrastructure. They embed decisions about how agents reason, what data they access, and how models improve. The enterprise consumes those decisions rather than architecting them. If your differentiation is not in Layer 2C — if AI reasoning is a cost center, not a competitive weapon — delegating that layer to VAST may be exactly the right choice at petabyte scale. If your differentiation is in 2C, write down explicitly which authorities you have delegated and treat that as strategic risk, not a footnote.

Google Cloud Knowledge Catalog — Productizing the Non-Portable Layer

When I moved vCTOA, my production AI system, off Google and onto DGX Spark, the thing that broke was not storage. It was the semantic layer that decided what my data meant and what reached the model. At Cloud Next this week, Google turned that exact layer into a product.

Google announced a cross-cloud lakehouse alongside it: zero-copy Iceberg data access across AWS, Azure, GCP, and any source supporting the Iceberg format, with no data movement required. But the lakehouse is not the story. It is plumbing. The Knowledge Catalog is the intelligence that rides on top of it.

The Knowledge Catalog takes data (structured tables and unstructured content in storage), processes it through Gemini, and creates a semantic graph. That graph maps how the data across your entire organization relates to itself. When a model or an agent needs context, the graph translates the request into precise data retrieval from the right sources, across clouds, without the enterprise having to build the retrieval logic manually.
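Google has not published the internals of the Knowledge Catalog, so the following is only a toy illustration of the general idea: a semantic graph that resolves a request for a concept into specific data sources, wherever they live. Every concept, edge, and storage URI below is hypothetical. The point is that the graph, not the storage, encodes the judgment about what connects to what.

```python
from collections import deque

# Hypothetical semantic graph: each concept links to related concepts.
EDGES: dict[str, list[str]] = {
    "customer churn": ["support tickets", "billing history"],
    "billing history": ["invoices"],
    "support tickets": [],
    "invoices": [],
}

# Hypothetical mapping from concepts to where the underlying data lives (two clouds).
SOURCES: dict[str, str] = {
    "support tickets": "gs://example-crm/tickets/",
    "billing history": "s3://example-finance/billing/",
    "invoices": "s3://example-finance/invoices/",
}


def resolve(concept: str, max_hops: int = 2) -> list[str]:
    """Walk the graph breadth-first and collect data sources for related concepts."""
    seen, found = {concept}, []
    queue = deque([(concept, 0)])
    while queue:
        node, depth = queue.popleft()
        if node in SOURCES:
            found.append(SOURCES[node])
        if depth == max_hops:
            continue  # do not expand beyond the hop limit
        for neighbor in EDGES.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append((neighbor, depth + 1))
    return found


print(resolve("customer churn"))
```

Swap in a different graph and the same request resolves to different sources, even though none of the underlying data has moved.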

In 4+1 terms: Google just built a managed Layer 1C capability that sits above everyone’s Layer 1A — including their competitors’. The Knowledge Catalog does not care whether your data sits in S3, in MinIO, in VAST, or in Google Cloud Storage. It builds the semantic intelligence on top of whatever substrate you have.

This is the most direct validation of the argument I have been making for months: Layer 1A is table stakes. The value is in the semantic layer that transforms data into context for inference.

It is also the most potent form of borrowed judgment in the market.

Borrowed judgment cost: very high — and the most consequential to understand.

The Knowledge Catalog does not just store your data or move your data. It decides what your data means. It builds the graph that determines how information across your organization connects. It makes the retrieval decisions that determine what context reaches your models. Those are not infrastructure decisions. Those are reasoning decisions.

I know this because I tested it. When I ran vCTOA on DGX Spark as a proof of concept, the retrieval pipeline, the semantic relationships, the context construction logic — all of it had to be rebuilt. I could move the data in an afternoon. Rebuilding the intelligence about my data took weeks. And the reason I went back to Google was precisely because the judgment I had borrowed was worth more than the portability I had proved.

The Knowledge Catalog makes that coupling more valuable, not less. Cross-cloud reach means the catalog can index data sitting anywhere. That is genuinely powerful for reducing hallucinations: better semantic understanding means better retrieval, which means more precise context and more trustworthy inference. For enterprises whose primary constraint is inference quality, this may be the most important announcement at Next.

But every enterprise that builds agents dependent on the Knowledge Catalog’s semantic graph is borrowing Google’s judgment about how their data relates to itself. That judgment is not portable. There is no S3-equivalent standard for semantic graphs. There is no Iceberg for Knowledge Catalogs. When the catalog becomes the foundation of your retrieval architecture — when your models depend on Google’s graph to reason correctly — you have coupled your AI system to Google’s platform at the layer where it matters most.

That is not a criticism. It is a structural observation. And it is the exact same structural observation that applies to VAST’s InsightEngine and to any vendor building the semantic intelligence layer above storage.

The question is not whether borrowed judgment is bad. The question is whether the enterprise is borrowing it intentionally — with full understanding of what becomes non-portable — or implicitly, because the platform made it easy and the coupling only becomes visible when you try to leave. If inference quality across multi-cloud data is the binding constraint, borrowing Google’s semantic judgment may be the most rational decision you can make in 2026. Just know what you are signing.

The Borrowed Judgment Framework

Stop evaluating Layer 1A vendors on Layer 1A criteria. They all pass. That game is over.

Evaluate them on borrowed judgment — how much of your AI system’s reasoning intelligence becomes coupled to the vendor, and at which layer.

| | AWS S3 Tables | MinIO AIStor | VAST AI OS | Google Knowledge Catalog |
|---|---|---|---|---|
| Layer 1A | Managed | Enterprise-operated | VAST-managed or enterprise-managed | Substrate-agnostic (via cross-cloud Iceberg) |
| Layer 1B | Managed services (OpenSearch, Bedrock) | Enterprise-built (external vector DB on AIStor) | Native (vectors as first-class data type in DataBase) | Subsumed into the Knowledge Catalog semantic graph |
| Layer 1C | Enterprise-built on AWS services | Enterprise-built | VAST platform (DataEngine, SyncEngine) | Knowledge Catalog (Gemini-powered semantic graph) |
| Layer 2C | Enterprise + AWS services | Enterprise | VAST (PolicyEngine, TuningEngine) | Enterprise + Google agent platform |
| Portability of data | Limited (AWS-native) | Full | Multi-environment | Cross-cloud by design |
| Portability of judgment | Moderate (services are replaceable) | Full (no judgment embedded) | Low (platform absorbs reasoning layer) | Very low (semantic graph is non-portable) |
| Borrowed judgment cost | Moderate | Zero | High | Very high |
| Best fit | Hyperscaler-native workloads | Sovereignty-first, team-rich enterprises | Petabyte-scale integrated platform | Cross-cloud semantic intelligence |

Four coherent models. None is universally correct. Each optimizes for a different organizational reality. And each borrows a different amount of judgment from the vendor.

The Real Evaluation

If your AI workloads live in a hyperscaler and you are not sovereignty-constrained, AWS S3 Tables is the default. Do not fight the default. Spend your decision energy on Layer 1C pipeline quality and Layer 2C policy design.

If you need placement authority, your data is sub-petabyte, and you have the team to build Layers 1C through 2C yourself: MinIO AIStor gives you the cleanest substrate. You own the freedom. You own the work.

If you are operating at petabyte scale, you want an integrated platform, and you are comfortable with vendor authority over Layers 1C through 2C: VAST’s AI OS eliminates integration complexity. Go in with eyes open about what you are delegating.

If your primary constraint is inference quality across multi-cloud data and you need a semantic intelligence layer now: Google’s Knowledge Catalog is the most direct solution to the “right embeddings to the right GPUs” problem. Understand that the semantic graph it builds becomes your most consequential vendor dependency — more consequential than where your data sits.

In all four cases, the same truth applies: the storage layer does not determine whether your AI system hallucinates. The layers above it do. And the layers above it are where borrowed judgment lives.

The Pattern I Keep Seeing

I documented this in the vCTOA migration. I documented it in the AI Failure Mode Map. I documented it in the AI Factory Economics Framework. And I keep seeing it in Buyer Rooms with enterprise CTOs.

Organizations scale the wrong layer. They upgrade the model when the retrieval pipeline is broken. They expand storage when the embedding strategy is wrong. They buy a bigger platform when the metadata discipline is missing. And increasingly, they borrow semantic judgment from a platform vendor without recognizing that the graph — not the data — is where the real coupling happens.

The fix is almost never at Layer 0 or Layer 1A. The fix is almost always at Layer 1C or Layer 2C. The layers nobody wants to own because they require organizational discipline, not procurement.

That is why enterprises keep failing at production AI. Not because the storage is wrong. Not because the model is weak. Because the layers between storage and inference — the layers where data becomes context and context becomes reasoning — are designed implicitly or not designed at all.

Those layers do not show up in a vendor demo. They show up in Year 2, when the system hallucinates on production data and nobody can explain why — and when the enterprise discovers that the judgment they need to fix it belongs to someone else’s platform.

You do not “buy AI” from a vendor. You buy decisions about which layers you own and which layers you let the platform own. That is architecture, not procurement.

And if your organization cannot answer who has decision authority for Layer 1C and Layer 2C, it does not matter which storage vendor you pick. You have already ceded the most important part of your AI system.
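One lightweight way to answer that question is a decision-authority register: for each layer, record who decides and whether that judgment is borrowed from a vendor. The sketch below is a hypothetical format, not the Decision Authority Placement Model itself; the layer keys, owner strings, and the `Authority` type are assumptions. It flags two cases: no named owner for Layer 1C or Layer 2C, and vendor-held authority at those layers that nobody has written down as a deliberate delegation.

```python
from dataclasses import dataclass
from typing import Optional

# The layers where data becomes context and context becomes reasoning.
CRITICAL_LAYERS = ("1C", "2C")


@dataclass(frozen=True)
class Authority:
    """Who decides at a given layer, and whether a delegation was made deliberately."""
    owner: Optional[str]        # a named team or vendor; None means nobody
    vendor: bool = False        # True if the judgment is borrowed from a platform
    acknowledged: bool = False  # True if the delegation is documented as a strategic risk


def audit(register: dict[str, Authority]) -> list[str]:
    """Return findings for the critical layers in a decision-authority register."""
    findings = []
    for layer in CRITICAL_LAYERS:
        entry = register.get(layer)
        if entry is None or entry.owner is None:
            findings.append(f"Layer {layer}: no decision owner")
        elif entry.vendor and not entry.acknowledged:
            findings.append(f"Layer {layer}: judgment borrowed from {entry.owner} implicitly")
    return findings


# Hypothetical register for an organization that adopted a managed semantic layer.
register = {
    "1A": Authority(owner="cloud provider", vendor=True, acknowledged=True),
    "1C": Authority(owner="platform semantic catalog", vendor=True),
}
for finding in audit(register):
    print(finding)
```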

Where This Fits

This analysis extends the original MinIO S3-compatible storage stress test I published in January 2026. It evaluates four architectural archetypes, not the full vendor landscape. The borrowed judgment framework applies to any vendor willing to declare which layers they operate and where the enterprise is borrowing judgment — and I welcome that conversation.

Both frameworks referenced throughout, the 4+1 AI Infrastructure Model and the Decision Authority Placement Model, are documented together in 4+1: The Enterprise AI Field Manual, available as a free download.

For vendor teams — storage, platform, and semantic layer alike — if you want to hear how enterprise CTOs are actually making these decisions, what they prioritize, what organizational blockers kill deals before architecture matters, and how they think about borrowed judgment in practice: that is what CTO Advisor Buyer Rooms are designed to surface.

Update – April 29th, 2026: What Happened When I Tried to Buy the Missing Layer

Since writing this, I did what every sane CTO would do: I tried to buy the non-portable layer instead of building it.

Google’s Knowledge Catalog (the evolution of Dataplex’s universal catalog) is exactly the kind of product you’d expect to solve this: an enterprise metadata and context graph that uses Gemini to understand how data across your organization relates to itself and to feed that understanding into agents and models. It’s a managed semantic layer that sits above storage and, in theory, should save you from having to hand‑roll your own graph.

What I discovered is that there are actually three distinct layers in play:

- Enterprise context graph (Knowledge Catalog). This is Google’s view of your world: datasets, owners, lineage, policies, and cross‑cloud relationships at lake/warehouse scale. It answers “what data assets exist and how do they connect?” and is incredibly valuable for governance and AI assistants that need to navigate enterprise data.
- Application knowledge graph (mine). This is my view of my world: a small, opinionated graph where nodes are the stable concepts in my frameworks (Layer 2C, DAPM, Fourth Cloud, 4+1) and each node points to the latest post that represents my current position. I ended up backing this with Firestore. Firestore isn’t “the graph product”; it’s just the document database under a knowledge graph pattern I control.
- Semantic search over content. This is the retrieval layer: time‑scoped semantic search for current events and vendor queries, with full‑corpus fallback for historical questions. It handles the long tail of questions that don’t map cleanly onto a named framework.
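These layers can be read as a routing order for an incoming question. The sketch below is a hypothetical reconstruction, not the author’s code: it checks the owned concept graph first, then searches recent content within a time window, and falls back to the full corpus only when the recent window returns nothing. Keyword overlap stands in for semantic similarity to keep the example self-contained, and the post titles, dates, and 90-day window are all assumptions.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Post:
    title: str
    published: date
    text: str


def overlap_score(query: str, text: str) -> int:
    """Stand-in for semantic similarity: count shared lowercase words."""
    return len(set(query.lower().split()) & set(text.lower().split()))


def search(query: str, posts: list[Post]) -> list[Post]:
    """Return posts with any overlap, best match first (ties keep input order)."""
    scored = [(overlap_score(query, p.text), p) for p in posts]
    ranked = sorted(scored, key=lambda s: s[0], reverse=True)
    return [p for score, p in ranked if score > 0]


def route(query: str, concept_graph: dict[str, Post], posts: list[Post],
          today: date, window_days: int = 90) -> list[Post]:
    """Owned concept graph first, then time-scoped search, then full-corpus fallback."""
    for concept, latest in concept_graph.items():
        if concept in query.lower():
            return [latest]
    cutoff = today - timedelta(days=window_days)
    recent = search(query, [p for p in posts if p.published >= cutoff])
    return recent or search(query, posts)


# Hypothetical content library and concept graph.
posts = [
    Post("MinIO stress test", date(2026, 1, 15), "minio s3 compatible storage stress test"),
    Post("Borrowed judgment", date(2026, 4, 24), "knowledge catalog semantic graph vendor"),
]
graph = {"borrowed judgment": posts[1]}
today = date(2026, 4, 29)
print([p.title for p in route("What is borrowed judgment?", graph, posts, today)])
print([p.title for p in route("vendor semantic graph news", graph, posts, today)])
print([p.title for p in route("minio storage test", graph, posts, today)])
```

The third query misses both the graph and the recent window, so only the full-corpus fallback finds the older post.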

In other words, I didn’t get away from building a knowledge graph. I just made it small and owned.

Knowledge Catalog gives me a powerful enterprise context graph, but it doesn’t know which specific blog post best captures my current view on “borrowed judgment” or Layer 2C in the 4+1 model. That’s an application‑level decision, not an enterprise metadata decision. The only way to get that behavior was to implement the graph pattern myself and treat Google’s catalog as one upstream source of truth, not a drop‑in replacement for my own reasoning layer.
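That division of labor, with the catalog as one upstream source and the reasoning layer owned in application code, can be expressed as a narrow interface. This is a sketch under assumptions: `CatalogSource` and `FakeCatalog` are hypothetical stand-ins, not the Knowledge Catalog API, which this example does not call. The application decides which post represents its current position; the catalog only contributes asset metadata.

```python
from typing import Protocol


class CatalogSource(Protocol):
    """Hypothetical seam for any upstream enterprise catalog."""

    def related_assets(self, term: str) -> list[str]:
        ...


class FakeCatalog:
    """In-memory stand-in for an upstream catalog, for illustration only."""

    def __init__(self, assets: dict[str, list[str]]) -> None:
        self._assets = assets

    def related_assets(self, term: str) -> list[str]:
        return self._assets.get(term, [])


def build_context(term: str, positions: dict[str, str], catalog: CatalogSource) -> dict:
    """Combine owned judgment (current position) with borrowed upstream metadata."""
    return {
        "current_position": positions.get(term),            # owned: which post speaks for us now
        "supporting_assets": catalog.related_assets(term),  # borrowed: what the catalog knows
    }


# Hypothetical owned mapping and catalog contents.
positions = {"layer 2c": "posts/borrowed-judgment"}
catalog = FakeCatalog({"layer 2c": ["dataset: agent_audit_logs"]})
print(build_context("layer 2c", positions, catalog))
```

Replacing `FakeCatalog` with a different catalog client changes only `supporting_assets`; the `positions` mapping, where the application-level judgment lives, stays with the application.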

The good news is that, with modern code tools, building that app‑level graph turned out to be structurally simple: define the concepts, wire the relationships, and keep the “latest view” pointers fresh as the content library grows. The hard part isn’t the code. The hard part is owning the judgment about which concepts deserve to be first‑class and how much of that judgment you’re willing to borrow from a platform versus encode in your own architecture.
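The structurally simple part can be illustrated in a few lines: concept nodes, relationships between them, and a pointer per concept that advances only when a newer post about that concept is published. Firestore is not used here; a plain dictionary stands in for the document store. The concept names come from the list above, while the post IDs and dates are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class ConceptNode:
    """A stable concept with links to related concepts and its latest-view pointer."""
    name: str
    related: set[str] = field(default_factory=set)
    latest_post: Optional[str] = None
    latest_date: Optional[date] = None


class ConceptGraph:
    def __init__(self) -> None:
        self.nodes: dict[str, ConceptNode] = {}

    def add_concept(self, name: str) -> None:
        self.nodes.setdefault(name, ConceptNode(name))

    def relate(self, a: str, b: str) -> None:
        """Wire an undirected relationship between two concepts."""
        self.add_concept(a)
        self.add_concept(b)
        self.nodes[a].related.add(b)
        self.nodes[b].related.add(a)

    def publish(self, post_id: str, published: date, concepts: list[str]) -> None:
        """Advance each tagged concept's pointer only if this post is newer."""
        for name in concepts:
            self.add_concept(name)
            node = self.nodes[name]
            if node.latest_date is None or published > node.latest_date:
                node.latest_post, node.latest_date = post_id, published


graph = ConceptGraph()
graph.relate("Layer 2C", "DAPM")
graph.publish("post-a", date(2026, 1, 15), ["Layer 2C"])
graph.publish("post-b", date(2026, 4, 24), ["Layer 2C", "DAPM"])
graph.publish("post-old", date(2025, 11, 1), ["DAPM"])  # older post: pointer stays put
print(graph.nodes["Layer 2C"].latest_post, graph.nodes["DAPM"].latest_post)
```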


Keith Townsend is a seasoned technology leader and Founder of The Advisor Bench, specializing in IT infrastructure, cloud technologies, and AI. With expertise spanning cloud, virtualization, networking, and storage, Keith has been a trusted partner in transforming IT operations across industries, including pharmaceuticals, manufacturing, government, software, and financial services.

Keith’s career highlights include leading global initiatives to consolidate multiple data centers, unify disparate IT operations, and modernize mission-critical platforms for “three-letter” federal agencies. His ability to align complex technology solutions with business objectives has made him a sought-after advisor for organizations navigating digital transformation.

A recognized voice in the industry, Keith combines his deep infrastructure knowledge with AI expertise to help enterprises integrate machine learning and AI-driven solutions into their IT strategies. His leadership has extended to designing scalable architectures that support advanced analytics and automation, empowering businesses to unlock new efficiencies and capabilities.

Whether guiding data center modernization, deploying AI solutions, or advising on cloud strategies, Keith brings a unique blend of technical depth and strategic insight to every project.